Firecracker vs Docker - Security Tradeoffs for Agentic Workloads

AI agents generate unpredictable code, and on a shared kernel one successful exploit can reach every tenant. Firecracker microVMs provide hardware-enforced isolation at container-like speed and cost. Here's when to use each technology.

TL;DR

Your AI coding assistant just generated elegant Python code. You hit run. Three seconds later, it’s rewriting binaries on your host machine, not inside its sandbox but on the actual host. This isn’t fiction. CVE-2019-5736 made exactly this possible against runc, the low-level runtime underneath Docker, containerd, and most Kubernetes clusters.

Here’s the problem: every container on a host shares one kernel with roughly 40 million lines of code. One exploitable vulnerability can compromise every container on your platform. Traditional apps are predictable, so you can audit them. AI agents autonomously generate code, recursively fork processes, experiment with memory-mapped I/O, and exercise kernel code paths your security team never anticipated.

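The solution? Hardware isolation. Firecracker microVMs put each workload behind CPU virtualization boundaries that a guest kernel exploit alone can’t cross; escaping also requires a bug in the hypervisor or the hardware. Each agent gets its own kernel instance. Boot time? Around 125 milliseconds or less. VMM memory overhead? Under 5MB. Cost difference versus Docker? Negligible. Cost of a breach? GDPR fines alone can reach €20 million or 4% of global annual turnover, whichever is higher.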

AWS built Firecracker for AWS Lambda and AWS Fargate, and Lambda runs on it in production at massive scale. The technology is proven. This article shows you exactly when to use containers, when to use gVisor, and when only full VM isolation will protect you. The decision matrix inside could save your platform.

The Itch: Why This Matters Right Now

Picture this: Your AI coding assistant just generated a Python script to analyze customer data. Clean code. Elegant even. It passes your syntax checks. You hit “run.”

Three seconds later, that script has rewritten a binary on your host machine. Not inside its little sandbox. On the actual host. And the next time your container runtime spins up, it executes that modified code with root privileges. Game over.

This isn’t theoretical. This exact scenario was CVE-2019-5736, a documented exploit against runc, the low-level runtime beneath Docker, containerd, CRI-O, and therefore most Kubernetes clusters. A process running as root inside a container abused how runc handled file descriptors for /proc/self/exe to overwrite the runc binary on the host, then simply waited for the next unsuspecting developer to type “docker exec.”

Here’s what keeps security architects up at night: you’re betting your entire platform on 40 million lines of kernel code. Every container on your system shares that exact same kernel. One vulnerability, one clever exploit, one wrong syscall, and suddenly the walls between all your containers evaporate.

And with AI agents? The threat model is much worse. Traditional applications are predictable: they execute what programmers specified. AI agents generate code autonomously. An LLM optimizing file I/O might recursively call os.fork() until it exhausts process limits or hits a bug in rarely exercised kernel paths. A code optimizer experimenting with memory-mapped I/O could hit filesystem driver vulnerabilities. An agent using subprocess.Popen(shell=True) opens command injection paths, and once an attacker can run arbitrary commands inside the sandbox, a kernel exploit is the natural next step.

The uncomfortable truth: the security model we built for packaging applications was never designed to contain autonomous code generation.

To be clear: containers remain the right choice for trusted code in controlled environments—internal tools, CI/CD pipelines, microservices you control. The security model specifically breaks down when executing untrusted or autonomously-generated code, where a single kernel vulnerability affects all tenants. For a deeper analysis of the specific threats AI agents pose, see The Threat Model for Agentic AI: What Actually Goes Wrong.
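The subprocess.Popen(shell=True) risk described earlier in this section takes only a few lines to demonstrate. This minimal Python sketch shows the first link in that chain, command injection, next to the standard fix of passing arguments as a list. It is an illustration rather than an exploit: `echo INJECTED` stands in for a real attacker’s payload, and the helper names are hypothetical. (subprocess.run forwards the same shell argument to Popen.)

```python
import subprocess

def run_with_shell(filename: str) -> str:
    # UNSAFE: the whole string goes to /bin/sh, so a ';' inside `filename`
    # terminates the echo and starts a second, attacker-chosen command.
    return subprocess.run(f"echo {filename}", shell=True,
                          capture_output=True, text=True, check=True).stdout

def run_without_shell(filename: str) -> str:
    # SAFER: arguments are passed as a list and never parsed by a shell,
    # so `filename` reaches echo as a single literal argument.
    return subprocess.run(["echo", filename],
                          capture_output=True, text=True, check=True).stdout

if __name__ == "__main__":
    payload = "report.csv; echo INJECTED"  # hypothetical agent-generated "filename"
    print(run_with_shell(payload), end="")     # prints report.csv, then INJECTED
    print(run_without_shell(payload), end="")  # prints report.csv; echo INJECTED
```

Avoiding the shell closes this particular door, but it does nothing about what the agent’s own code can do once it runs; that is the isolation problem the rest of this article addresses.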

The Deep Dive: The Struggle for a Solution

The Villain: Why the Shared Kernel Changes Everything

When you spin up a Docker container, you’re not creating anything close to a separate machine. You’re creating an elaborately decorated process on the host system. Linux namespaces give it its own view of process IDs, mount points, and network interfaces. Cgroups limit resource consumption. Seccomp filters block dangerous system calls. Capabilities restrict what root can do.

It’s an impressive security stack that Google engineers and the wider Linux kernel community spent years building. It works remarkably well for what it was designed for: resource isolation and multi-tenant process management where you fundamentally trust the code.

But here’s the architectural reality that no amount of security features can overcome: all those containers are making system calls into the exact same kernel. That kernel runs in the CPU’s privileged kernel mode (ring 0 on x86), with access to all memory, all hardware, and all processes across the entire host.

The kernel contains roughly 40 million lines of complex C code managing everything from filesystems to network protocols to memory management. It’s an attack surface measured in acres. Every year brings a steady stream of new kernel CVEs, and a disturbing number are exploitable by any process that can make the right sequence of system calls.
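A quick way to see that a container is “just a process” is to inspect its namespace handles. The sketch below assumes Linux and uses only the standard library: it reads the symlinks under /proc/self/ns and prints the running kernel release. Run it on the host and then inside a container, and the namespace identifiers differ while the kernel release stays identical, because there is only one kernel.

```python
import os
import platform

NAMESPACES = ("pid", "mnt", "net", "uts", "ipc", "user", "cgroup")

def namespace_ids(pid: str = "self") -> dict:
    """Map namespace type -> identifier such as 'pid:[4026531836]' (Linux only).

    Two processes share a namespace exactly when these identifiers match.
    """
    ids = {}
    for ns in NAMESPACES:
        try:
            ids[ns] = os.readlink(f"/proc/{pid}/ns/{ns}")
        except OSError:
            continue  # unsupported namespace type, no permission, or no /proc
    return ids

if __name__ == "__main__":
    for ns, ident in namespace_ids().items():
        print(f"{ns:>6}  {ident}")
    # Same value on the host and in every container on it: there is one kernel.
    print("kernel release:", platform.release())
```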

The False Starts: When Security Features Aren’t Enough

The container ecosystem responded with layers of hardening. Docker’s default seccomp profile blocks around 44 of the 300-plus Linux system calls. AppArmor and SELinux add mandatory access controls. User namespaces let containers run as “root” inside without being root on the host. This is defense in depth: multiple layers where even if one breaks, the others hold.

And it works—until it doesn’t.

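CVE-2019-5736 walked straight past the default configuration. The vulnerability lay in how runc handled /proc/self/exe when starting or entering a container, for example via “docker exec.” A malicious process inside the container could obtain a file descriptor to /proc/self/exe that pointed back to the runc binary on the host. Write through that descriptor, and you’re modifying the host’s runc binary. On the next container operation, that modified binary executes with host root privileges. Namespaces, capabilities, and the default seccomp and AppArmor profiles did nothing to stop it; correctly configured user namespaces, where host root is not mapped into the container, were one of the few layers that did.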

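The 2024 “Leaky Vessels” vulnerability in runc (CVE-2024-21626) was even more insidious. runc leaked a file descriptor for a host directory into the container, so an image whose WORKDIR pointed at that descriptor (such as /proc/self/fd/7) gave the container’s process a working directory on the host filesystem. It could be triggered when starting a container from a malicious image, through runc exec, or during docker build via a crafted Dockerfile; the same disclosure also covered three BuildKit flaws. Booby-trapped images on public registries became weapons: build or run one, and your host filesystem was exposed.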
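Both runc CVEs above are fixed in current releases, so the cheapest mitigation is knowing which version you run. A minimal sketch that parses `runc --version` output, assuming the usual `runc version X.Y.Z` first line; runc 1.1.12 is the release that fixed CVE-2024-21626, and CVE-2019-5736 was patched years earlier. Docker and containerd packages may bundle their own runc, so check the binary your runtime actually invokes.

```python
import re
import shutil
import subprocess

# runc 1.1.12 fixed CVE-2024-21626; CVE-2019-5736 was fixed back in 2019.
MIN_SAFE = (1, 1, 12)

def parse_runc_version(output: str):
    """Return (major, minor, patch) from `runc --version` output, or None."""
    match = re.search(r"runc version (\d+)\.(\d+)\.(\d+)", output)
    return tuple(int(part) for part in match.groups()) if match else None

def is_patched(output: str) -> bool:
    # Pre-release strings like "1.0.0-rc6" parse as (1, 0, 0), correctly below MIN_SAFE.
    version = parse_runc_version(output)
    return version is not None and version >= MIN_SAFE

if __name__ == "__main__":
    if shutil.which("runc"):
        out = subprocess.run(["runc", "--version"], capture_output=True, text=True).stdout
        print(parse_runc_version(out), "ok" if is_patched(out) else "upgrade runc")
```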

Cgroup escape vulnerabilities (CVE-2022-0492) let containers execute commands on the host through the cgroup v1 release_agent mechanism. Kernel memory corruption bugs, such as the filesystem-context heap overflow CVE-2022-0185, provide privilege escalation paths. The four CVEs in this section score between 7.8 and 8.6 on CVSS v3, and public proof-of-concept exploits exist for them.
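CVE-2022-0492 depends on the release_agent file, which exists only in cgroup v1 hierarchies; cgroup v2 has no release_agent at all. The sketch below (Linux-only, standard library) uses a common heuristic: a unified v2 hierarchy exposes cgroup.controllers at its root. Hybrid layouts still mount v1 controllers and are reported as v1 here. Moving to cgroup v2 closes this particular vector, not the broader class of kernel bugs.

```python
import os

def cgroup_version(mount_point: str = "/sys/fs/cgroup") -> int:
    """Return 2 if mount_point is a unified cgroup v2 hierarchy, otherwise 1.

    Heuristic: only the v2 root exposes cgroup.controllers. v1 and hybrid
    layouts (which still offer release_agent) are reported as 1.
    """
    if os.path.exists(os.path.join(mount_point, "cgroup.controllers")):
        return 2
    return 1

if __name__ == "__main__":
    print("cgroup version:", cgroup_version())
```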

Here’s the sobering part: Kubernetes introduced the SeccompDefault feature in v1.22 (it graduated to GA in v1.27), but it only takes effect when the kubelet runs with --seccomp-default (or seccompDefault: true in its configuration file). Otherwise, pods that don’t request a profile run Unconfined, which as of early 2025 remains the upstream default for backward compatibility. Some managed services like GKE Autopilot enable it automatically, but many production clusters, particularly self-managed deployments and GKE Standard clusters, run without this protection unless explicitly configured. That leaves exploitable the kernel vulnerabilities that seccomp would otherwise block.
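Opting a pod in takes one field, and verifying it takes one file read. The sketch below builds a Pod manifest as a Python dict using standard securityContext fields (seccompProfile, allowPrivilegeEscalation, capabilities.drop; the pod and image names are placeholders) and parses the Seccomp line of /proc/self/status, where Linux reports 0 for disabled, 1 for strict, and 2 for filter mode.

```python
import json

SECCOMP_MODES = {0: "disabled", 1: "strict", 2: "filter"}

def hardened_pod(name: str, image: str) -> dict:
    """Pod manifest that opts into the container runtime's default seccomp profile."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Without this field (or kubelet --seccomp-default), the pod runs Unconfined.
            "securityContext": {"seccompProfile": {"type": "RuntimeDefault"}},
            "containers": [{
                "name": name,
                "image": image,
                "securityContext": {
                    "allowPrivilegeEscalation": False,
                    "capabilities": {"drop": ["ALL"]},
                },
            }],
        },
    }

def seccomp_mode(status_text: str) -> str:
    """Parse the 'Seccomp:' field of /proc/<pid>/status (Linux)."""
    for line in status_text.splitlines():
        if line.startswith("Seccomp:"):
            value = int(line.split()[1])
            return SECCOMP_MODES.get(value, f"unknown({value})")
    return "unavailable"  # kernel built without seccomp support

if __name__ == "__main__":
    # Placeholder names; kubectl apply -f accepts JSON as well as YAML.
    print(json.dumps(hardened_pod("agent-sandbox", "example.com/agent:latest"), indent=2))
    # Run inside the container to confirm a filter is actually applied.
    try:
        with open("/proc/self/status") as f:
            print("seccomp:", seccomp_mode(f.read()))
    except FileNotFoundError:
        print("seccomp: /proc not available")
```

Inside a pod running under RuntimeDefault, a process should typically report filter; one left Unconfined reports disabled unless it installed a filter itself.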

The Breakthrough: Hardware Isolation as the Security Boundary

Amazon’s Firecracker team asked a different question: What if we didn’t share the kernel at all?

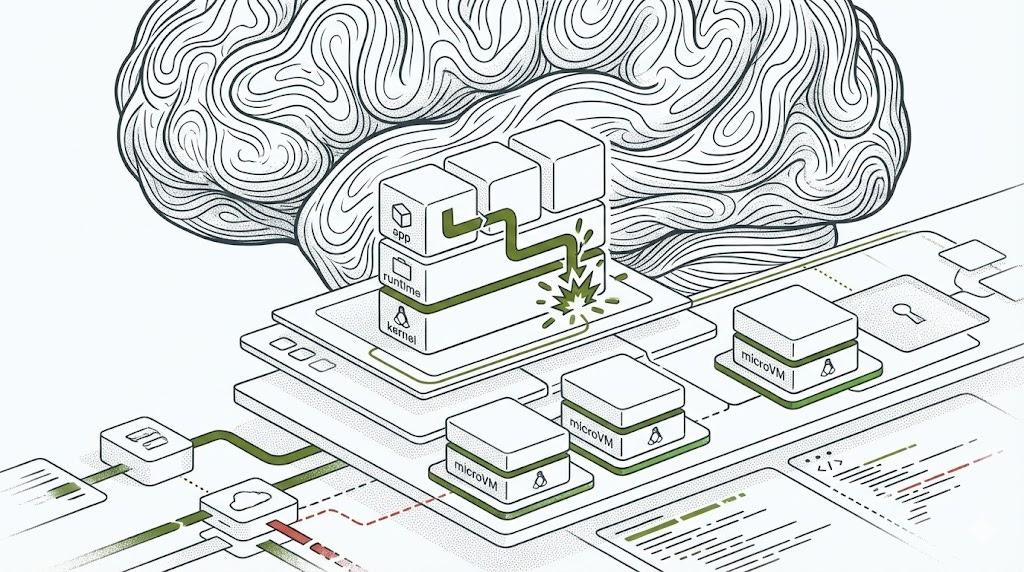

Firecracker is a virtual machine monitor, but nothing like traditional VMs. Instead of emulating complete computers with BIOS, firmware, and dozens of legacy devices, Firecracker strips the machine down to the bare essentials.

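Written in about fifty thousand lines of Rust, a language whose safe subset rules out entire classes of memory-safety bugs, it emulates only a handful of devices. The original design had five: virtio network, virtio block storage, virtio-vsock for host-guest communication, a serial console, and a minimal keyboard controller whose only job is signaling shutdown (later releases added a few more, such as a memory balloon and an entropy device). No USB. No floppy drives. No sound cards. No graphics. Every device left out is attack surface removed: VENOM (CVE-2015-3456), for example, was a guest-to-host escape through QEMU’s emulated floppy disk controller.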

The security model has two concentric rings. The outer ring is hardware virtualization. Modern processors (Intel VT-x, AMD-V) run guest code in a separate, less privileged CPU mode, and second-level page tables (Intel EPT, AMD NPT) configured by KVM determine which physical memory the guest can reach. The guest kernel cannot address host memory or other VMs; crossing that line takes a bug in KVM, the VMM, or the CPU itself, not just a guest kernel exploit.

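The inner ring is the “jailer,” which sandboxes each Firecracker VMM process. Even if an attacker escaped the hardware virtualization boundary, which would take a CPU bug or a KVM or VMM vulnerability, they’d still be trapped inside a chroot jail, running as an unprivileged user and, in a typical production setup, inside dedicated PID and network namespaces with no view of other host processes and no network devices beyond that VM’s tap interface. Firecracker also installs seccomp filters on its own threads; the NSDI 2020 paper reports them allowing just 24 system calls and 30 ioctls, with argument filtering.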

Critically: Firecracker runs one VMM process per microVM, not a single daemon managing all VMs. Each agent workload gets its own isolated Firecracker process, which improves both security (compromise of one VMM doesn’t affect others) and operational simplicity (no complex multi-tenant daemon to orchestrate).
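Because each microVM gets its own jailer and VMM, launching one starts with building a per-VM command line. A sketch in Python, assuming the jailer flags documented in the Firecracker repository (`--id`, `--exec-file`, `--uid`, `--gid`, `--chroot-base-dir`, `--netns`); the per-VM UID scheme and network namespace path are hypothetical choices, and flags can change between releases, so verify against your version’s jailer documentation.

```python
import os
from typing import List, Optional

def jailer_command(vm_id: str, uid: int, gid: int,
                   firecracker_bin: str = "/usr/bin/firecracker",
                   chroot_base: str = "/srv/jailer",
                   netns: Optional[str] = None) -> List[str]:
    """argv for one jailer, which then execs one Firecracker VMM for one microVM."""
    argv = [
        "jailer",
        "--id", vm_id,                  # unique per microVM; also names its chroot
        "--exec-file", firecracker_bin,
        "--uid", str(uid),              # unprivileged identity the VMM runs as
        "--gid", str(gid),
        "--chroot-base-dir", chroot_base,
    ]
    if netns is not None:
        argv += ["--netns", netns]      # pre-created namespace holding this VM's tap device
    return argv

def chroot_root(vm_id: str, firecracker_bin: str = "/usr/bin/firecracker",
                chroot_base: str = "/srv/jailer") -> str:
    """Directory the jailer chroots into: <base>/<exec-file name>/<id>/root."""
    return os.path.join(chroot_base, os.path.basename(firecracker_bin), vm_id, "root")
```

Giving each VM its own UID and GID (for example, 10000 plus a VM index, a hypothetical scheme) means one compromised VMM process cannot signal its neighbors or read their files through ordinary permissions.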

Each microVM gets its own kernel. If that kernel has vulnerabilities, they only affect that one VM. A successful kernel exploit inside one agent’s VM gives you root inside that VM—but you’re still trapped behind the hardware boundary. You’d need a separate hypervisor escape to touch the host or other workloads.

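Boot performance makes this practical for real-time agent workloads. Traditional VMs take seconds because they emulate firmware initialization and device discovery. Firecracker skips all that. A minimalist Linux kernel loads directly. No BIOS. No POST. Firecracker’s specification targets at most 125 milliseconds from the InstanceStart API call to the guest’s /sbin/init for a minimal configuration; your agent runtime’s own startup time comes on top of that.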
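Booting a microVM is a handful of REST calls to the API socket the Firecracker process exposes (for example, started with `firecracker --api-sock /tmp/firecracker.socket`). The sketch below mirrors the sequence from Firecracker’s getting-started guide using only Python’s standard library: set the kernel, attach a root drive, size the machine, then send InstanceStart. The kernel and rootfs paths are placeholders, and field names should be checked against the API specification for your release.

```python
import http.client
import json
import socket

class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection over a Unix domain socket (Firecracker's API transport)."""

    def __init__(self, socket_path: str):
        super().__init__("localhost")  # host only fills the Host header
        self.socket_path = socket_path

    def connect(self):
        self.sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        self.sock.connect(self.socket_path)

def boot_requests(kernel: str, rootfs: str, vcpus: int = 1, mem_mib: int = 128):
    """Ordered (method, path, body) calls that configure and start one microVM."""
    return [
        ("PUT", "/boot-source", {
            "kernel_image_path": kernel,
            "boot_args": "console=ttyS0 reboot=k panic=1 pci=off",
        }),
        ("PUT", "/drives/rootfs", {
            "drive_id": "rootfs",
            "path_on_host": rootfs,
            "is_root_device": True,
            "is_read_only": False,
        }),
        ("PUT", "/machine-config", {"vcpu_count": vcpus, "mem_size_mib": mem_mib}),
        ("PUT", "/actions", {"action_type": "InstanceStart"}),
    ]

def boot_microvm(socket_path: str, kernel: str, rootfs: str) -> None:
    for method, path, body in boot_requests(kernel, rootfs):
        conn = UnixHTTPConnection(socket_path)
        try:
            conn.request(method, path, body=json.dumps(body).encode(),
                         headers={"Content-Type": "application/json",
                                  "Accept": "application/json"})
            resp = conn.getresponse()
            detail = resp.read().decode()
        finally:
            conn.close()
        if resp.status >= 300:  # Firecracker answers 204 No Content on success
            raise RuntimeError(f"{method} {path} -> {resp.status}: {detail}")
```

With a Firecracker process already listening, `boot_microvm("/tmp/firecracker.socket", "vmlinux.bin", "rootfs.ext4")` would configure and start the guest; its console output appears on the Firecracker process’s stdout.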

The Middle Ground: When Full VMs Are Too Much

Google’s gVisor reimplements the Linux kernel in user space. The “Sentry”—a Go program—intercepts every system call from containerized applications. Instead of reaching the host kernel, the Sentry handles them using its own Linux kernel subsystem implementations.

When your AI agent tries to open a file, the syscall traps into the Sentry’s virtual filesystem. For actual disk reads, a separate “Gofer” process performs host filesystem operations with minimal privileges. The attack path now requires exploiting both the Go-based Sentry and the host kernel—two completely different codebases.

The tradeoff? System call overhead. Every syscall bounces through user space. For CPU-bound workloads like LLM inference, the overhead is negligible. For syscall-heavy workloads, file operations can be 100-200% slower in the worst cases.
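Whether that overhead matters depends on your workload’s syscall mix, which is easy to measure. This rough Python sketch times a syscall-bound loop (os.stat) against a pure-compute loop; running the same image under runc and under runsc (for example with `docker run --runtime=runsc`, assuming runsc is registered with Docker) shows how much of the gap comes from syscall interception. Interpreter overhead inflates both numbers, so compare ratios across runtimes rather than absolute values.

```python
import os
import tempfile
import time

def time_per_op(fn, iterations: int) -> float:
    """Average wall-clock seconds per call of fn()."""
    start = time.perf_counter()
    for _ in range(iterations):
        fn()
    return (time.perf_counter() - start) / iterations

def syscall_vs_compute(iterations: int = 20_000) -> dict:
    with tempfile.NamedTemporaryFile() as tmp:
        # os.stat makes one stat-family syscall per call: the path gVisor intercepts.
        stat_s = time_per_op(lambda: os.stat(tmp.name), iterations)
    # Pure-Python arithmetic makes no syscalls, so the sandbox should barely matter.
    compute_s = time_per_op(lambda: sum(range(50)), iterations)
    return {"stat_us": stat_s * 1e6, "compute_us": compute_s * 1e6,
            "ratio": stat_s / compute_s}

if __name__ == "__main__":
    print(syscall_vs_compute())
```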

Kata Containers took the opposite path: VM-level isolation with minimal workflow changes. Kata wraps Firecracker, QEMU, Cloud Hypervisor, or other hypervisors behind the standard container runtime interface. To Kubernetes, it looks like regular containers. Under the hood, each pod runs in its own lightweight VM with its own guest kernel.

The cost is higher overhead: roughly 500 milliseconds to start and 125 megabytes of memory per VM, compared to Firecracker’s sub-5-megabyte VMM footprint (a figure that covers only the VMM process, not the guest kernel’s own memory). But you get drop-in compatibility. Change one line in your Kubernetes manifest to specify the Kata runtime class, and untrusted agent workloads get VM isolation while trusted services keep running in efficient containers. Note that “drop-in” still requires installing kata-runtime, configuring RuntimeClass resources, and validating CNI plugin compatibility: easier than custom Firecracker orchestration, but not zero-effort.
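The “one line” is `runtimeClassName`. A sketch of both Kubernetes objects as Python dicts, assuming a node runtime handler named `kata`; installers such as kata-deploy may register differently named handlers (per-hypervisor variants, for instance), so match whatever your nodes actually expose. Pod and image names are placeholders.

```python
import json

def runtime_class(name: str = "kata", handler: str = "kata") -> dict:
    # `handler` must match a runtime configured in containerd or CRI-O on each node.
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        "handler": handler,
    }

def agent_pod(name: str, image: str, runtime_class_name: str = "kata") -> dict:
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "runtimeClassName": runtime_class_name,  # the one line that selects VM isolation
            "containers": [{"name": name, "image": image}],
        },
    }

if __name__ == "__main__":
    for doc in (runtime_class(), agent_pod("untrusted-agent", "example.com/agent:latest")):
        print(json.dumps(doc, indent=2))
```

Trusted services simply omit `runtimeClassName` and keep using the cluster’s default runtime.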

The Operational Reality Check

Moving from Docker to microVM isolation affects your entire workflow.

Debugging: Docker’s docker exec and docker logs give instant access. Firecracker requires reading the guest’s serial console (which appears on the Firecracker process’s stdout when the guest boots with console=ttyS0), SSH into the guest over its tap interface, or an agent listening on vsock. The payoff is blast radius. A container that exhausts host resources or triggers a kernel panic can take down every workload on the host; a crashed microVM affects only its own workload.

Networking: Docker’s bridge networking is straightforward. Each Firecracker microVM needs its own tap device and network namespace configuration. Service meshes may require adapter layers. The upside: true network boundaries enforced by the hypervisor, not iptables rules circumventable by kernel exploits.
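Per-VM networking is mostly bookkeeping. The sketch below assumes one point-to-point /30 per microVM carved from a private pool: the host end of the tap device takes the first address, the guest the second, and the guest MAC is derived from the guest IP, following the pattern in Firecracker’s getting-started guide. The `fc-tap<N>` naming is hypothetical; the returned `api_body` is what you would PUT to `/network-interfaces/eth0` after creating the device (for example with `ip tuntap add dev fc-tap0 mode tap`).

```python
import ipaddress

def microvm_network(index: int, pool: str = "172.16.0.0/16") -> dict:
    """Allocate a dedicated /30 (host tap IP + guest IP) for microVM number `index`."""
    net = ipaddress.ip_network(pool)
    subnet = ipaddress.ip_network(f"{net.network_address + index * 4}/30")
    if not subnet.subnet_of(net):
        raise ValueError(f"pool {pool} exhausted at index {index}")
    host_ip, guest_ip = subnet.hosts()  # a /30 has exactly two usable addresses
    # Locally administered MAC (first octet 0x06) that embeds the guest IPv4 address.
    mac = "06:00:" + ":".join(f"{octet:02x}" for octet in guest_ip.packed)
    tap = f"fc-tap{index}"  # hypothetical naming; Linux caps interface names at 15 chars
    return {
        "tap": tap,
        "host_cidr": f"{host_ip}/30",
        "guest_cidr": f"{guest_ip}/30",
        "guest_mac": mac,
        # Body for PUT /network-interfaces/eth0 on this VM's Firecracker API socket.
        "api_body": {"iface_id": "eth0", "guest_mac": mac, "host_dev_name": tap},
    }
```

Index 0 yields 172.16.0.1 on the host side, 172.16.0.2 in the guest, and MAC 06:00:ac:10:00:02; a /16 pool covers 16,384 microVMs before raising.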

Image Build Pipelines: Docker images work everywhere Docker runs. Firecracker requires guest kernels (typically stripped to 5-10 megabytes) and minimal rootfs images (often 50 megabytes or less). Higher upfront effort, but better reproducibility.
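Sizing the rootfs image is a small but recurring chore. A minimal sketch, assuming you already have an unpacked root directory (for example from `docker export`): sum the file sizes, add headroom for ext4 metadata and scratch space, and round up. The 25% headroom and 16 MiB floor are arbitrary defaults, not Firecracker requirements.

```python
import math
import os

def rootfs_size_mib(root_dir: str, headroom: float = 0.25, minimum_mib: int = 16) -> int:
    """Rough ext4 image size (MiB) for a guest rootfs populated from root_dir."""
    total_bytes = 0
    for dirpath, _dirnames, filenames in os.walk(root_dir):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if not os.path.islink(path):  # symlinks cost an inode, not their target's size
                total_bytes += os.path.getsize(path)
    # Headroom covers ext4 metadata (inode tables, journal) plus runtime scratch space.
    return max(minimum_mib, math.ceil(total_bytes * (1 + headroom) / (1024 * 1024)))
```

You could then create the image with `truncate -s <size>M rootfs.ext4` followed by `mkfs.ext4 -d <root_dir> rootfs.ext4`; the `-d` option needs a reasonably recent e2fsprogs.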

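Monitoring: Container metrics flow through the Docker daemon or containerd. Firecracker writes JSON metrics to a file or named pipe that you configure through its API (the /metrics endpoint); they are flushed periodically or on demand with a FlushMetrics action. Integration requires custom exporters. The benefit: each microVM’s vCPU count and memory size are fixed explicitly at boot, so per-workload resource attribution is unambiguous.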
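A custom exporter can be small. This sketch assumes each flush produces one JSON object per line in the metrics file and converts every numeric leaf into a Prometheus text-format sample labeled with a VM ID. It deliberately does not depend on specific metric names, which vary between Firecracker releases; the `firecracker_` prefix and `vm_id` label are a hypothetical naming convention.

```python
import json
import re

def _metric_name(parts) -> str:
    # Prometheus metric names allow [a-zA-Z0-9_:]; replace anything else.
    return re.sub(r"[^a-zA-Z0-9_]", "_", "_".join(parts))

def flatten(obj: dict, path=("firecracker",)):
    """Yield (metric_name, value) for every numeric leaf of a nested dict."""
    for key, value in obj.items():
        current = path + (str(key),)
        if isinstance(value, dict):
            yield from flatten(value, current)
        elif isinstance(value, (int, float)) and not isinstance(value, bool):
            yield _metric_name(current), value

def to_prometheus(metrics_line: str, vm_id: str) -> str:
    """Render one JSON metrics line as Prometheus text exposition format."""
    data = json.loads(metrics_line)
    samples = [f'{name}{{vm_id="{vm_id}"}} {value}' for name, value in flatten(data)]
    return "\n".join(samples) + "\n"
```

In practice you would tail the metrics file configured for each VM and serve the latest rendered block from an HTTP endpoint that Prometheus scrapes.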

These operational challenges are solvable. AWS built Firecracker for AWS Lambda and AWS Fargate and runs it at massive scale, and Google’s Cloud Run first-generation environment sandboxes workloads with gVisor. Open-source tools like firecracker-containerd and kata-containers provide integration layers.

The Resolution: Your New Superpower

Here’s what the performance numbers actually mean for your infrastructure budget.

Capacity Analysis (80GB Host):

For minimal workloads running Python runtime and basic dependencies:

Docker containers: 40MB per container → 80GB ÷ 40MB = ~2,000 containers

Kata Containers: 165MB per VM (125MB base + 40MB app) → 80GB ÷ 165MB = ~485 VMs

Firecracker microVMs: 45MB per microVM (<5MB VMM + 40MB app) → 80GB ÷ 45MB = ~1,778 microVMs

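The density difference between Firecracker and Docker is small for minimal workloads: about 11% (1,778 versus 2,000 per host). Two caveats apply. Firecracker’s figure counts only the VMM’s own overhead, not the memory the guest kernel and init consume inside each microVM, so real density will be somewhat lower. And for AI agents requiring larger memory allocations (512MB for model serving, 2GB for training), the per-VM overhead becomes proportionally smaller: isolation cost stays roughly constant while application memory dominates.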
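The capacity arithmetic is easy to reproduce and adapt to your own footprints. This sketch uses the same assumptions as the figures above (an 80GB host in decimal megabytes, 40MB per app, a ~125MB Kata base, a <5MB Firecracker VMM) and floors the result, which is why it reports 484 and 1,777 rather than the rounded ~485 and ~1,778.

```python
# Reproduces the capacity figures above; all inputs are the article's assumptions.
HOST_MB = 80_000  # 80GB host, in decimal megabytes
APP_MB = 40       # Python runtime plus basic dependencies

FOOTPRINT_MB = {
    "docker": APP_MB,
    "kata": 125 + APP_MB,       # ~125MB VM base + app
    "firecracker": 5 + APP_MB,  # <5MB VMM overhead + app; guest kernel memory NOT included
}

def density(host_mb: int, per_workload_mb: int) -> int:
    """Whole workloads of a given footprint that fit in host memory."""
    return host_mb // per_workload_mb

if __name__ == "__main__":
    for name, mb in FOOTPRINT_MB.items():
        print(name, mb, density(HOST_MB, mb))
    # docker 40 2000
    # kata 165 484
    # firecracker 45 1777
```

Raising `APP_MB` to a larger agent footprint shows the Docker and Firecracker figures converging as application memory dominates.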

Cost Analysis:

For a platform running 1,000 concurrent agent sessions on c5.metal instances ($4.08/hour in us-east-1, about 730 hours per month), using the 80GB-per-host densities above:

Docker: ~2,000 containers per host → 1 instance = $2,978/month

Firecracker: ~1,778 microVMs per host → 1 instance = $2,978/month (essentially identical)

Kata Containers: ~485 VMs per host → 3 instances = $8,935/month (3x cost)
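The same cost arithmetic in code, so you can plug in your own instance price and densities. It assumes on-demand pricing as quoted above and 730 hours per month; reserved or spot pricing would change the absolute figures but not the ratios.

```python
import math

HOURLY_USD = 4.08      # c5.metal on-demand price in us-east-1, as quoted above
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12

def monthly_cost(sessions: int, per_host: int):
    """Return (hosts needed, monthly on-demand cost in USD)."""
    hosts = math.ceil(sessions / per_host)  # a partially used host still costs a full host
    return hosts, round(hosts * HOURLY_USD * HOURS_PER_MONTH, 2)

if __name__ == "__main__":
    for name, per_host in {"docker": 2000, "firecracker": 1777, "kata": 484}.items():
        print(name, *monthly_cost(1000, per_host))
    # docker 1 2978.4
    # firecracker 1 2978.4
    # kata 3 8935.2
```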

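These host counts are conservative: a c5.metal actually has 192 GiB of RAM, enough for even Kata to fit 1,000 sessions on a single host, so density per host is the more durable comparison. Now compare infrastructure costs to breach exposure: GDPR fines can reach €20 million or 4% of global annual turnover, whichever is higher, before counting reputation damage and customer churn. Even a 3x infrastructure increase for Kata is small next to that risk. Firecracker provides VM-level isolation at container-equivalent economics.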

Performance Overhead:

Firecracker achieves >95% of bare-metal CPU performance. The isolation boundary adds up to about 125 milliseconds at startup, imperceptible compared to LLM inference times measured in seconds.

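I/O Performance (indicative overhead versus bare metal; actual figures vary with kernel, storage backend, and configuration):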

Sequential I/O (large files, datasets):

Docker: ~100% bare metal

gVisor: 30-50% overhead

Firecracker: 3-11% overhead

Random I/O (databases, many small files):

Docker: ~100% bare metal

gVisor: 100-200% overhead

Firecracker: 10-20% overhead

Network throughput (APIs, streaming):

Docker: ~100% bare metal

gVisor: 15-25% overhead

Firecracker: 5-10% overhead (scales to 10Gbps+ in production)

For AI agent workloads:

Code generation agents (low I/O, CPU-bound): All approaches perform well

Data analysis agents (heavy file I/O): Firecracker preferred over gVisor for throughput

API integration agents (network-heavy): Minimal difference across options
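Because these figures vary so much with configuration, it is worth measuring your own workload before deciding. A rough Python sketch comparing sequential reads with random 4 KiB reads on a scratch file; run it under each runtime on the same host. It reads a freshly written file, so results largely reflect the page cache and the syscall path rather than the disk; for disk-level numbers, a dedicated tool such as fio is the better choice.

```python
import os
import random
import tempfile
import time

BLOCK = 4096  # bytes per random read

def read_benchmark(size_mb: int = 64, random_reads: int = 2000) -> dict:
    """Sequential MB/s and random 4 KiB read IOPS on a scratch file."""
    chunk = os.urandom(1024 * 1024)
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        for _ in range(size_mb):
            tmp.write(chunk)
        path = tmp.name
    try:
        fd = os.open(path, os.O_RDONLY)
        try:
            start = time.perf_counter()
            while os.read(fd, 1024 * 1024):  # sequential pass in 1 MiB chunks
                pass
            seq_s = time.perf_counter() - start

            blocks = size_mb * 1024 * 1024 // BLOCK
            offsets = [random.randrange(blocks) * BLOCK for _ in range(random_reads)]
            start = time.perf_counter()
            for offset in offsets:
                os.pread(fd, BLOCK, offset)  # one positioned read syscall each
            rnd_s = time.perf_counter() - start
        finally:
            os.close(fd)
    finally:
        os.remove(path)
    return {"sequential_MBps": size_mb / seq_s, "random_iops": random_reads / rnd_s}

if __name__ == "__main__":
    print(read_benchmark())
```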

The Decision Matrix: Choosing Your Isolation Model

DOCKER

Threat Model Fit: Trusted code in controlled environments. Security depends on kernel hardening, seccomp, namespace isolation. Vulnerable to container escape CVEs affecting shared kernel.

Performance Characteristics:

Startup: <100ms

Memory: 5-50MB base

CPU: ~100% bare metal

I/O: ~100% bare metal

Operational Complexity: 2/5 - Familiar tooling, extensive ecosystem, straightforward debugging and networking

Ideal Use Cases:

Internal development tools

CI/CD build agents

Microservices with trusted code

High-density workloads where security threats are minimal

GVISOR

Threat Model Fit: Moderate threat environments. Protects against most kernel exploits by intercepting syscalls in userspace. Two-layer defense requires exploiting both Sentry and host kernel.

Performance Characteristics:

Startup: 100-200ms

Memory: 10-80MB overhead

CPU: 95-98% bare metal

I/O: roughly 35-75% of bare metal (syscall-dependent; random I/O at the low end)

Operational Complexity: 3/5 - Requires runsc runtime configuration, some debugging complexity, compatible with standard container tooling

Ideal Use Cases:

Customer-facing APIs executing untrusted code

Multi-tenant SaaS platforms

Sandboxed execution environments

Workloads needing better-than-container isolation without full VM overhead

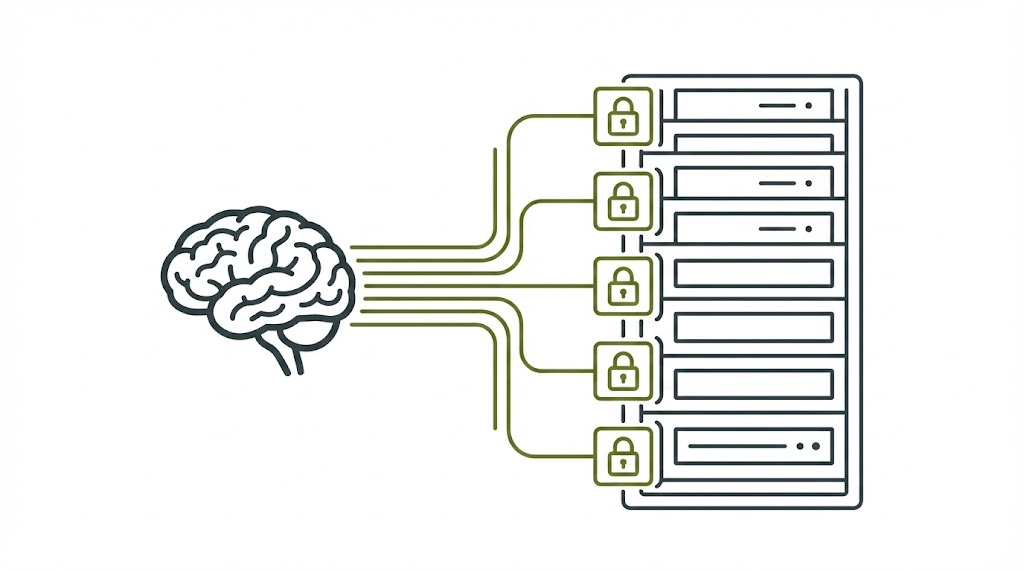

FIRECRACKER

Threat Model Fit: High-threat environments with untrusted or autonomous code generation. Hardware-enforced VM isolation contains guest kernel exploits; reaching the host requires a separate hypervisor or hardware vulnerability. Each workload has a dedicated kernel instance.

Performance Characteristics:

Startup: <125ms

Memory: <5MB VMM overhead

CPU: >95% bare metal

I/O: roughly 80-97% of bare metal (random I/O at the low end)

Operational Complexity: 4/5 - Custom orchestration required, kernel/rootfs image management, tap device networking, different debugging workflow

Ideal Use Cases:

AI agents generating arbitrary code

Serverless function platforms (AWS Lambda model)

Zero-trust multi-tenant execution

Workloads processing sensitive data requiring regulatory compliance

KATA CONTAINERS

Threat Model Fit: High-threat environments requiring VM isolation with minimal workflow changes. Same security model as Firecracker but designed for Kubernetes compatibility.

Performance Characteristics:

Startup: 300-500ms

Memory: 125MB base + application

CPU: 93-95% bare metal

I/O: 85-90% of bare metal

Operational Complexity: 3.5/5 - Requires kata-runtime installation, RuntimeClass configuration, CNI validation. Drop-in for K8s but not zero-effort.

Ideal Use Cases:

Kubernetes clusters with mixed trust workloads

Gradual migration from containers to VM isolation

Teams wanting VM security within Kubernetes ecosystem

Environments where 125MB overhead per workload is acceptable

How to Use This Matrix:

Assess your threat model: What’s the damage if isolation fails? Complete customer data breach and regulatory violations demand hardware isolation (Firecracker/Kata).

Evaluate performance requirements: Strict latency SLAs? I/O-intensive workloads? gVisor’s syscall overhead may not work for database-backed agents; Firecracker handles this better.

Consider operational maturity: Small teams might find Kata’s Kubernetes compatibility easier than building custom Firecracker orchestration. Larger organizations with platform teams can optimize Firecracker deployments.
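The three steps can be folded into a first-pass heuristic. This is a hypothetical helper that encodes the matrix above, not a substitute for a real threat assessment; the three threat labels and their meanings are assumptions chosen for illustration.

```python
THREAT_LEVELS = ("low", "moderate", "high")

def recommend_isolation(threat: str, on_kubernetes: bool,
                        io_heavy: bool, has_platform_team: bool) -> str:
    """First-pass pick from the decision matrix (hypothetical helper).

    threat: "low" = trusted code, "moderate" = untrusted but lower-stakes,
    "high" = autonomous code generation or regulated data.
    """
    if threat not in THREAT_LEVELS:
        raise ValueError(f"threat must be one of {THREAT_LEVELS}")
    if threat == "low":
        return "docker"
    if threat == "moderate" and not io_heavy:
        return "gvisor"  # syscall interception without full VM overhead
    # High threat, or I/O-heavy work where gVisor's syscall overhead hurts.
    if on_kubernetes and not has_platform_team:
        return "kata"    # VM isolation behind a RuntimeClass, no custom orchestration
    return "firecracker"
```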

The Future Outlook

As AI agents become more autonomous, security requirements intensify. Today’s agents mostly run constrained code against APIs. Tomorrow’s agents will have shell access, make system-level calls, and operate with increasing independence.

The isolation technology exists now. It performs well. It costs little compared to breach risk. The decision isn’t whether to adopt stronger isolation—the CVE history and cloud provider deployments make that case definitively. The decision is which isolation approach fits your operational constraints and performance requirements.

Starting with VM-level isolation isn’t paranoia—it’s basic security hygiene based on demonstrated attack patterns and known kernel vulnerability rates. Container escapes aren’t theoretical—they happen regularly, score highly on CVSS, and affect all tenants on a platform.

Your AI agents are generating code you didn’t write, making decisions you didn’t anticipate, and exploring solution spaces you can’t predict. The shared kernel model containers provide is fundamentally incompatible with that threat model.

The engineering is proven. The overhead is acceptable. The alternative—discovering your isolation was inadequate after a breach—is unacceptable.

Choose accordingly.

Peace. Stay curious! End of transmission.


References

AWS Firecracker Team - “Firecracker: Lightweight Virtualization for Serverless Applications,” USENIX NSDI 2020. https://www.usenix.org/system/files/nsdi20-paper-agache.pdf (DOI: 10.5555/3388242.3388287)

Google gVisor Team - “gVisor Architecture Guide: Security Model.” https://gvisor.dev/docs/architecture_guide/security/

Kata Containers Project - “Kata Containers Architecture.” https://github.com/kata-containers/kata-containers/blob/main/docs/design/architecture/README.md

Liz Rice - “Container Security: Fundamental Technology Concepts that Protect Containerized Applications,” O’Reilly Media, 2020. ISBN: 978-1492056706. https://www.oreilly.com/library/view/container-security/9781492056690/

National Vulnerability Database - Container escape CVEs:

CVE-2019-5736 (runc container escape): https://nvd.nist.gov/vuln/detail/CVE-2019-5736

CVE-2024-21626 (Leaky Vessels): https://nvd.nist.gov/vuln/detail/CVE-2024-21626

CVE-2022-0185 (heap overflow in filesystem context handling): https://nvd.nist.gov/vuln/detail/CVE-2022-0185

CVE-2022-0492 (cgroup release_agent escape): https://nvd.nist.gov/vuln/detail/CVE-2022-0492

Firecracker Specification and Performance Benchmarks. https://github.com/firecracker-microvm/firecracker/blob/main/SPECIFICATION.md

Kubernetes SeccompDefault Feature Documentation. https://kubernetes.io/blog/2021/08/25/seccomp-default/